Emotion Recognition in Multi-Party Conversations

This project uses shallow machine learning models with feature engineering to predict the sentiment and emotion of utterances in multi-party conversations from the MELD dataset. Recurrent deep learning models are also trained as a benchmark, and the two approaches are compared on accuracy and F1 score.
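As a rough illustration of the two metrics, the sketch below computes accuracy and a support-weighted F1 score in plain Python. The averaging scheme (weighted by class support) is an assumption, since the project does not state which F1 variant it reports, and the function names and toy labels are hypothetical.

```python
from collections import Counter
from typing import Dict, List


def accuracy(y_true: List[str], y_pred: List[str]) -> float:
    """Fraction of utterances whose predicted label matches the gold label."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def per_class_f1(y_true: List[str], y_pred: List[str]) -> Dict[str, float]:
    """F1 for each label, computed as 2*TP / (2*TP + FP + FN)."""
    scores = {}
    for label in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores[label] = 2 * tp / denom if denom else 0.0
    return scores


def weighted_f1(y_true: List[str], y_pred: List[str]) -> float:
    """Average of per-class F1 weighted by each class's gold-label count (assumed variant)."""
    scores = per_class_f1(y_true, y_pred)
    support = Counter(y_true)
    return sum(scores[label] * n for label, n in support.items()) / len(y_true)


# Toy labels for illustration only; these are not MELD results.
y_true = ["neutral", "neutral", "joy", "anger"]
y_pred = ["neutral", "joy", "joy", "neutral"]
acc = accuracy(y_true, y_pred)
wf1 = weighted_f1(y_true, y_pred)
```

A weighted average keeps frequent classes from being drowned out by rare ones, which can matter when the label distribution is skewed; a macro average would instead treat every emotion equally.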

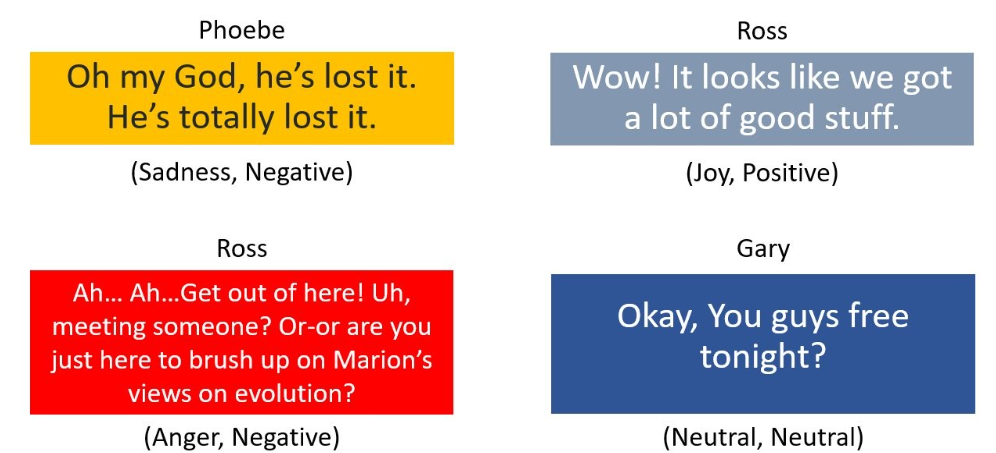

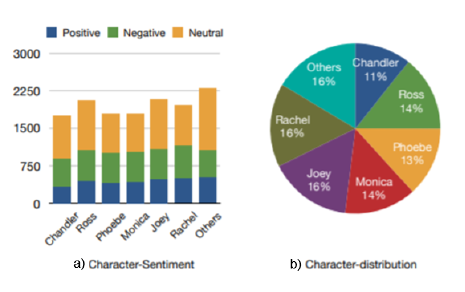

The Multimodal EmotionLines Dataset (MELD) comprises 13,708 utterances from 1,433 dialogues from the TV series F.R.I.E.N.D.S. Each utterance is labeled with one of 7 emotions (Anger, Fear, Disgust, Joy, Neutral, Sadness and Surprise) and one of 3 sentiments (Positive, Neutral and Negative). The data distribution is as follows.

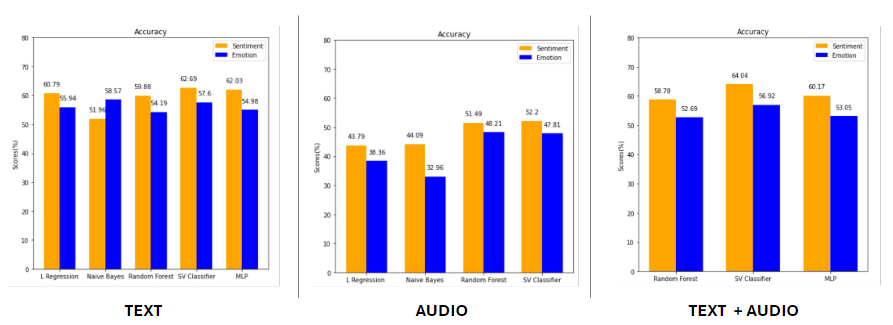

Model performance is compared using the text and audio modalities individually, and then using the text and audio features combined.
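One common way to combine two modalities is feature-level (early) fusion, where the text and audio vectors of each utterance are concatenated before being passed to a classifier. The sketch below assumes that approach; the project does not state which fusion method it uses, and the function name, utterance IDs, and feature values are hypothetical.

```python
from typing import Dict, List


def fuse_features(
    text_feats: Dict[str, List[float]],
    audio_feats: Dict[str, List[float]],
) -> Dict[str, List[float]]:
    """Concatenate text and audio vectors for utterances present in both inputs."""
    fused = {}
    for utt_id, text_vec in text_feats.items():
        audio_vec = audio_feats.get(utt_id)
        if audio_vec is None:
            continue  # skip utterances that have no audio features
        fused[utt_id] = list(text_vec) + list(audio_vec)
    return fused


# Hypothetical per-utterance features keyed by an assumed utterance ID format.
text_feats = {"dia0_utt0": [0.1, 0.7], "dia0_utt1": [0.4, 0.2]}
audio_feats = {"dia0_utt0": [0.9, 0.3, 0.5]}
fused = fuse_features(text_feats, audio_feats)
```

The fused vectors can then be fed to the same models used for the single-modality runs, which keeps the comparison between text-only, audio-only, and combined features straightforward.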